Hello, fellow chatbot creators! If you are diving into the world of AI and want to run your own models locally, you have probably heard two big names: Ollama and GPT4All. Both are fantastic tools that let you chat with AI without sending your data to the cloud. But which one should you choose for your chatbot project?

It is not about which one is “better,” but which one is better for you. Let’s break down the key differences in a simple, non-technical way.

Model Selection: A Giant Library vs. A Curated Collection

Think of Ollama as a giant library. It offers an enormous range of AI models, from small, efficient ones with just 1 billion parameters (great for simple tasks) all the way up to massive, powerful ones with hundreds of billions of parameters (like deepseek-r1:671b). This wide selection is Ollama’s superpower. If you want to experiment with the latest and greatest model that just came out, chances are Ollama supports it.

GPT4All, on the other hand, is more like a carefully curated bookstore. It supports fewer models than Ollama, but the team behind GPT4All selects and optimizes them to work well within its ecosystem. That makes it less confusing for beginners: you don’t have to spend hours picking a model; you can just start with the recommended ones.

For you, this means: If you are a tinkerer who loves trying different models, Ollama is your playground. If you prefer a “just works” setup without too many choices, GPT4All is more comfortable.

Power vs. Practicality: The Parameter Puzzle

We mentioned parameters (like 7b, 13b, 70b). In simple terms, more parameters usually mean a smarter, more knowledgeable model. Ollama clearly wins the power race here. Because it can run huge models, it can give answers much closer to what you’d expect from a cloud service like ChatGPT, provided your computer is strong enough.

GPT4All focuses on models that run well on ordinary computers, even without a powerful graphics card. Its recommended models typically have fewer parameters, which makes them faster and less demanding on your hardware. You trade a bit of top-tier “smartness” for speed and accessibility.
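To put rough numbers on “less demanding on your hardware,” here is a back-of-the-envelope sketch in Python. It assumes the memory needed for a model’s weights is simply the parameter count times the bits stored per parameter, divided by 8, and that local models are commonly compressed (quantized) to about 4 bits per parameter. Both are simplifying assumptions: real model files vary, and the conversation context and the runtime need extra memory on top.

```python
def weight_memory_gb(params_billions: float, bits_per_param: float = 4.0) -> float:
    """Rough size of a model's weights in gigabytes (1 GB = 10**9 bytes).

    Billions of parameters times bytes per parameter gives gigabytes directly.
    Assumption: ignores context memory and runtime overhead, so real usage is higher.
    """
    bytes_per_param = bits_per_param / 8
    return params_billions * bytes_per_param


if __name__ == "__main__":
    # Model sizes mentioned in this article; 4 bits per parameter is an assumption.
    for size in (1, 7, 13, 70, 671):
        print(f"{size:>4}b at 4-bit:  ~{weight_memory_gb(size):.1f} GB")
    # The same 7b model stored at 16 bits per parameter, for comparison.
    print(f"   7b at 16-bit: ~{weight_memory_gb(7, 16):.1f} GB")
```

Under these assumptions a 7b model needs roughly 3.5 GB for its weights, which fits on a typical laptop, while a 671b model needs over 300 GB, which is why the biggest models call for serious hardware.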

For you, this means: Do you have a powerful gaming PC or Mac and need the best possible answers? Lean towards Ollama. Are you working on a standard laptop and value speed and stability? GPT4All is a safer bet.

The Ease of Talking to Your Files: RAG Showdown

This is a big one for many creators. RAG (Retrieval-Augmented Generation) is a fancy term for letting the AI read your own documents (like PDFs, Word files, or text files) to answer questions.

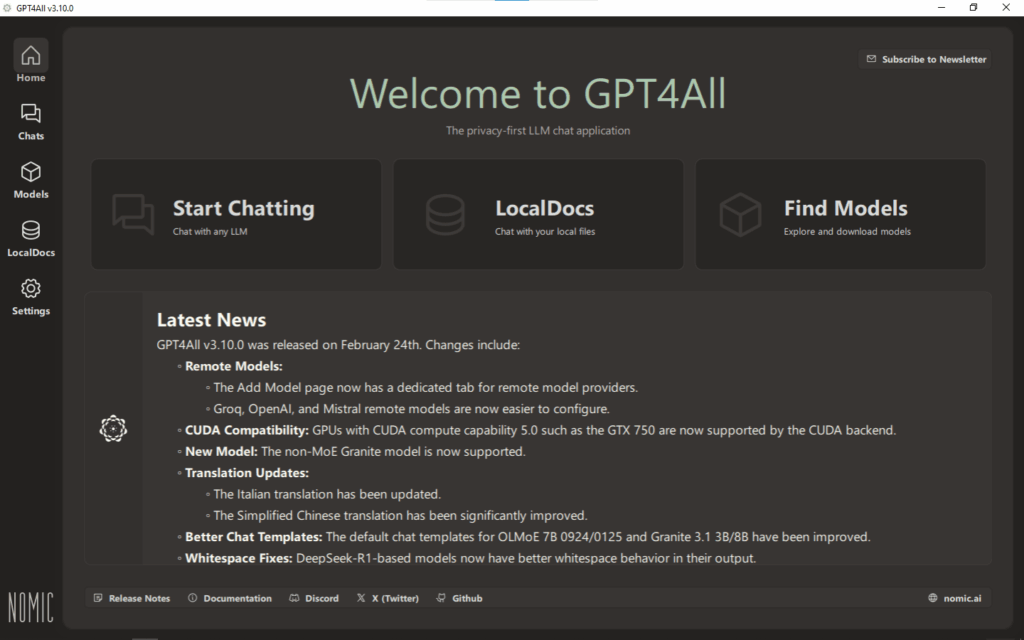

Here, GPT4All is the undisputed champion of ease. Its desktop application has a beautiful, built-in feature called “LocalDocs.” You just point it to a folder on your computer, and that’s it! The chatbot will automatically use the information from your files in its answers. It is incredibly simple and requires no coding.

With Ollama, implementing this kind of RAG functionality is harder. Ollama itself is a powerful engine, but it doesn’t come with the rest of the car. You have to build that yourself in code (for example, Python scripts plus other libraries) that connects your documents to the AI. It’s flexible, but not beginner-friendly.
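To give a feel for what building the rest of the car involves, here is a minimal RAG sketch in Python. It uses only the standard library and Ollama’s local HTTP API, which listens on `http://localhost:11434` by default. It splits documents into chunks, turns each chunk into a vector with an embedding model, picks the chunks most similar to the question by cosine similarity, and hands them to a chat model. The model names `nomic-embed-text` and `llama3.2` are assumptions (use any embedding and chat models you have pulled), and a real system would add a vector database, caching, and a way to read PDFs and Word files.

```python
import json
import math
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # Ollama's default local address
EMBED_MODEL = "nomic-embed-text"        # assumption: any embedding model you have pulled
CHAT_MODEL = "llama3.2"                 # assumption: any chat model you have pulled


def _post(path: str, payload: dict) -> dict:
    """Send a JSON POST request to the local Ollama server and decode the reply."""
    req = urllib.request.Request(
        OLLAMA_URL + path,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req) as resp:
        return json.loads(resp.read())


def embed(text: str) -> list[float]:
    """Turn text into a vector using Ollama's /api/embed endpoint."""
    return _post("/api/embed", {"model": EMBED_MODEL, "input": text})["embeddings"][0]


def chunk_text(text: str, words_per_chunk: int = 200) -> list[str]:
    """Split a document into chunks of at most `words_per_chunk` words."""
    words = text.split()
    return [" ".join(words[i:i + words_per_chunk])
            for i in range(0, len(words), words_per_chunk)]


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity: 1.0 means the vectors point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query_vec: list[float], chunk_vecs: list[list[float]], k: int = 3) -> list[int]:
    """Indices of the k chunks most similar to the query, best first."""
    ranked = sorted(range(len(chunk_vecs)),
                    key=lambda i: cosine(query_vec, chunk_vecs[i]), reverse=True)
    return ranked[:k]


def build_prompt(question: str, context: list[str]) -> str:
    """Place the retrieved chunks in front of the question."""
    joined = "\n\n".join(context)
    return ("Answer the question using only the context below.\n\n"
            f"Context:\n{joined}\n\nQuestion: {question}")


def answer(question: str, documents: list[str], k: int = 3) -> str:
    """Retrieve relevant chunks, then ask the chat model (needs Ollama running)."""
    chunks = [c for doc in documents for c in chunk_text(doc)]
    chunk_vecs = [embed(c) for c in chunks]
    best = top_k(embed(question), chunk_vecs, k)
    prompt = build_prompt(question, [chunks[i] for i in best])
    reply = _post("/api/generate",
                  {"model": CHAT_MODEL, "prompt": prompt, "stream": False})
    return reply["response"]
```

With the Ollama server running and both models pulled, calling `answer(question, documents)` with your question and a list of document texts returns the model’s reply. Everything except the two HTTP calls is plain Python, which is exactly the part GPT4All’s LocalDocs handles for you.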

For you, this means: If your main goal is to create a chatbot that can answer questions based on your personal documents with zero coding, GPT4All is the clear choice. If you are a developer who wants to build a custom document system, Ollama gives you the tools.

Other Important Differences for a Chatbot Creator

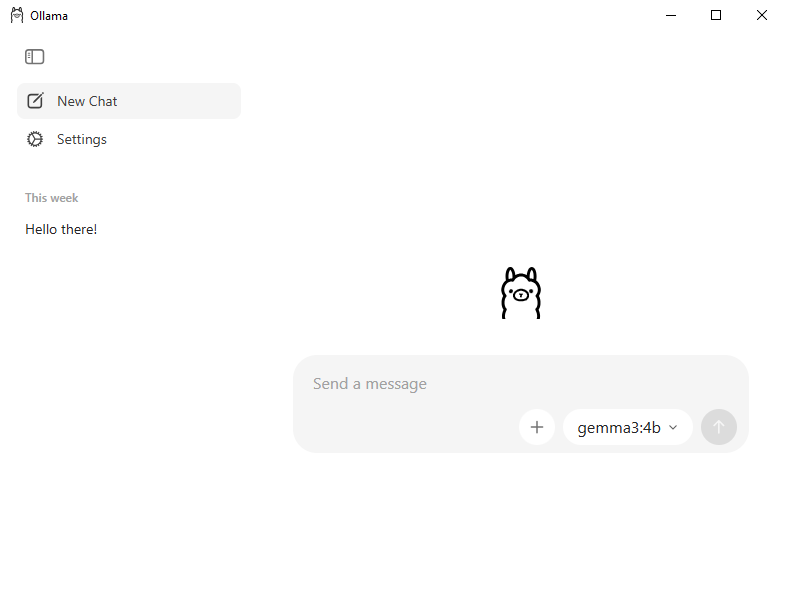

- User Experience: GPT4All offers a clean, all-in-one desktop application. You download, install, and you’re chatting. Ollama is primarily command-line driven, which can feel intimidating to newcomers. However, many third-party user interfaces (UIs) have been built for Ollama, which expands its accessibility.

- Community & Ecosystem: Ollama is exploding in popularity, especially among developers. This means there is a huge and growing community creating guides, tools, and integrations for it. GPT4All also has a strong community, but it feels more focused on end users than on builders.
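Third-party UIs can be built for Ollama because it runs a local server with an HTTP API (by default at `http://localhost:11434`), and a chat front end mostly needs to keep the conversation history and send it along with every turn. The Python sketch below shows that pattern against Ollama’s `/api/chat` endpoint using only the standard library. The `ChatSession` class and the `llama3.2` model name are illustrative assumptions, not part of any particular UI.

```python
import json
import urllib.request

OLLAMA_CHAT_URL = "http://localhost:11434/api/chat"  # Ollama's default local chat endpoint


def ollama_send(payload: dict) -> dict:
    """POST a chat request to the local Ollama server and return the decoded JSON."""
    req = urllib.request.Request(
        OLLAMA_CHAT_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req) as resp:
        return json.loads(resp.read())


class ChatSession:
    """Hypothetical helper: keeps the history, as a chat UI would, and resends it each turn."""

    def __init__(self, model: str, send=ollama_send, system: str = ""):
        self.model = model
        self.send = send  # swappable so the history logic can be tested without a server
        self.messages = []
        if system:
            self.messages.append({"role": "system", "content": system})

    def ask(self, text: str) -> str:
        """Add the user's message, send the whole conversation, store and return the reply."""
        self.messages.append({"role": "user", "content": text})
        reply = self.send({"model": self.model, "messages": self.messages, "stream": False})
        message = reply["message"]  # {"role": "assistant", "content": "..."}
        self.messages.append(message)
        return message["content"]
```

With Ollama running and the model pulled, `ChatSession("llama3.2").ask("Hello!")` returns the assistant’s reply. Resending the full history is what lets the model “remember” earlier turns, since each request is otherwise independent.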

The Final Verdict

So, which one is your perfect local AI tool?

Choose Ollama if: You are a power user or a developer. You want access to the widest variety of models, including the most powerful ones. You don’t mind using the command line and are willing to write code to add features like document reading.

Choose GPT4All if: You are a beginner or a non-technical user. Your priority is to get a chatbot running quickly that can easily chat with your local documents. You value a simple, straightforward desktop application over raw power and customization.

Both Ollama and GPT4All are amazing projects that empower us to bring AI onto our local machines. Your choice simply depends on what you want to build and how you want to build it. Happy building!